My Contribution

On this project, I worked as a UI/UX Designer and Scrum Master.

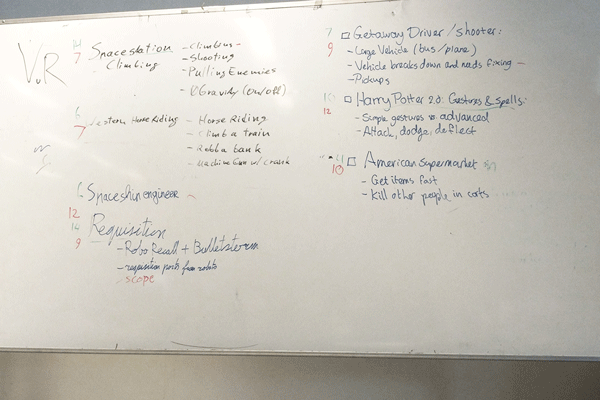

My tasks on this project included:

- Creating a concept with the team based on the project brief

- Pitching the game concept

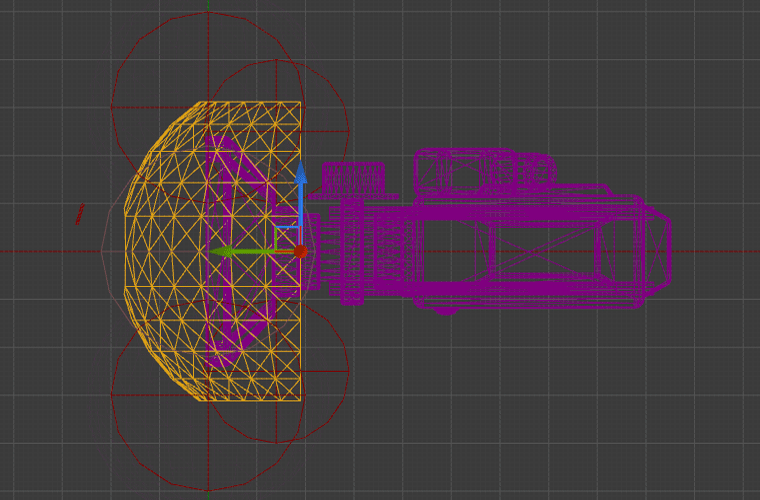

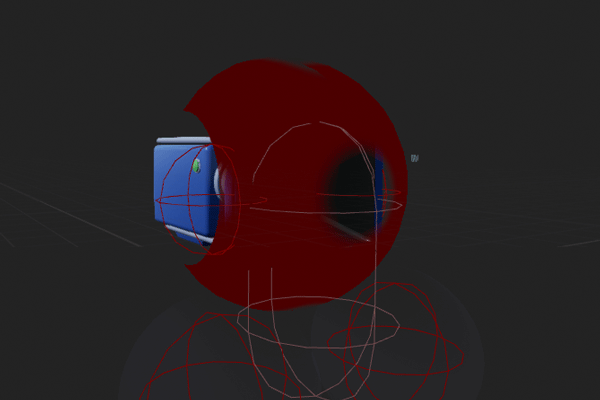

- Researching and balancing movement to prevent motion sickness

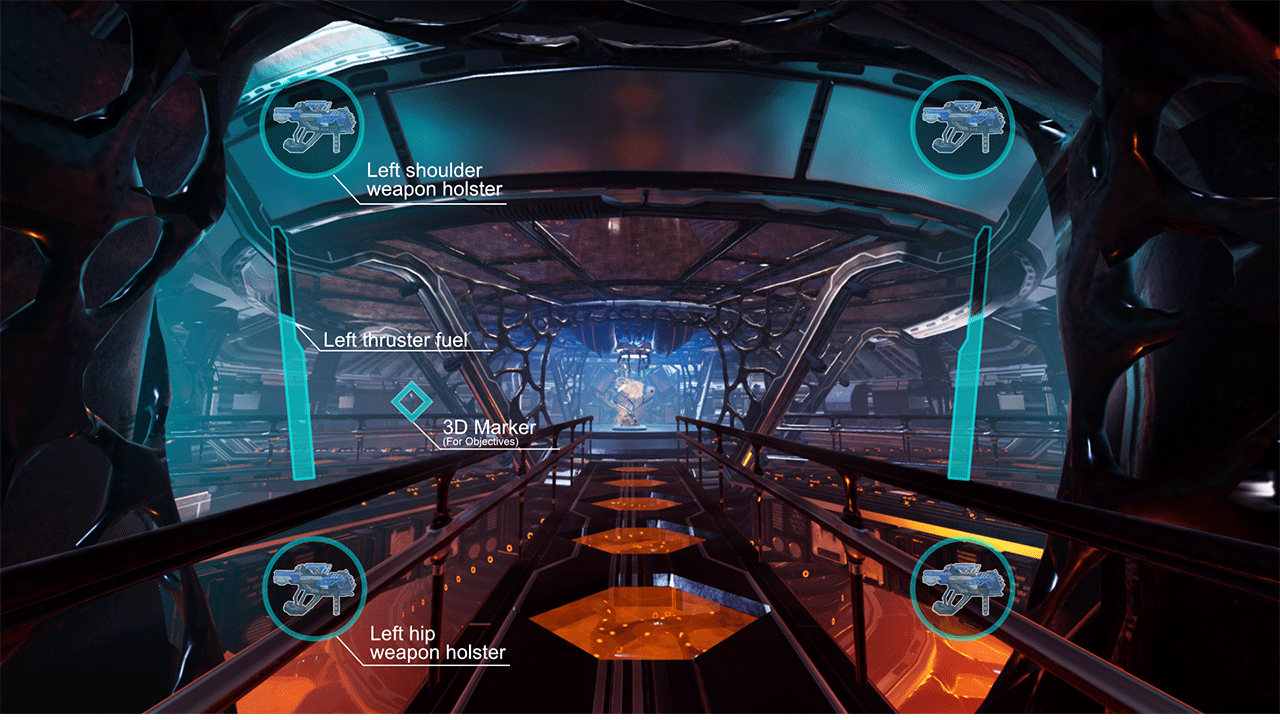

- Researching, designing, and implementing UI/UX elements such as weapon holsters and a bloody-screen effect

- Gaining experience in implementing and balancing haptic feedback in UE4

- Facilitating agile development in the team using Scrum and Jira

All concepts were brainstormed and pitched within the team.